Apache Kafka: What You Need to Know Before Adopting Event Streaming

A practical guide to Apache Kafka: when to use it, how it works under the hood, and how to implement producers and consumers in Node.js.

Your system has grown, and the endpoint that used to be simple now needs to notify five different services, send emails, update the cache, and fire analytics events. If you're doing all of that inside a single synchronous request, I have bad news: it won't scale. That's where Kafka comes in.

Mental model: Think of Kafka as a ship's captain's journal, an append-only log where every reader walks through the pages at their own pace, and the entries never disappear. A traditional queue, by contrast, is a trash can: once someone reads the message, it's gone. Hold this image. Half of Kafka's design decisions stop being weird the moment you do.

Why Kafka and Not a Simple Queue?

A lot of people confuse Kafka with a message queue like RabbitMQ or SQS. But Kafka is fundamentally different:

- RabbitMQ/SQS: once a message is consumed and acknowledged, it is removed from the queue. Each message in a queue goes to one consumer.

- Kafka: the message is persisted in a log. Multiple consumers can read the same message, each at their own pace.

This changes everything. With Kafka, you have a distributed immutable log that serves as the source of truth for your system's events.

War story: Kafka was born at LinkedIn around 2010 because their activity feed pipeline was melting under the weight of "who viewed your profile" type events. Today Netflix pushes billions of events per day through Kafka for telemetry and recommendations, and Uber's trip pipeline runs on it end to end. If it scales for "every tap in every Uber on the planet," it'll probably survive your checkout flow.

The Concepts That Matter

Topics and Partitions

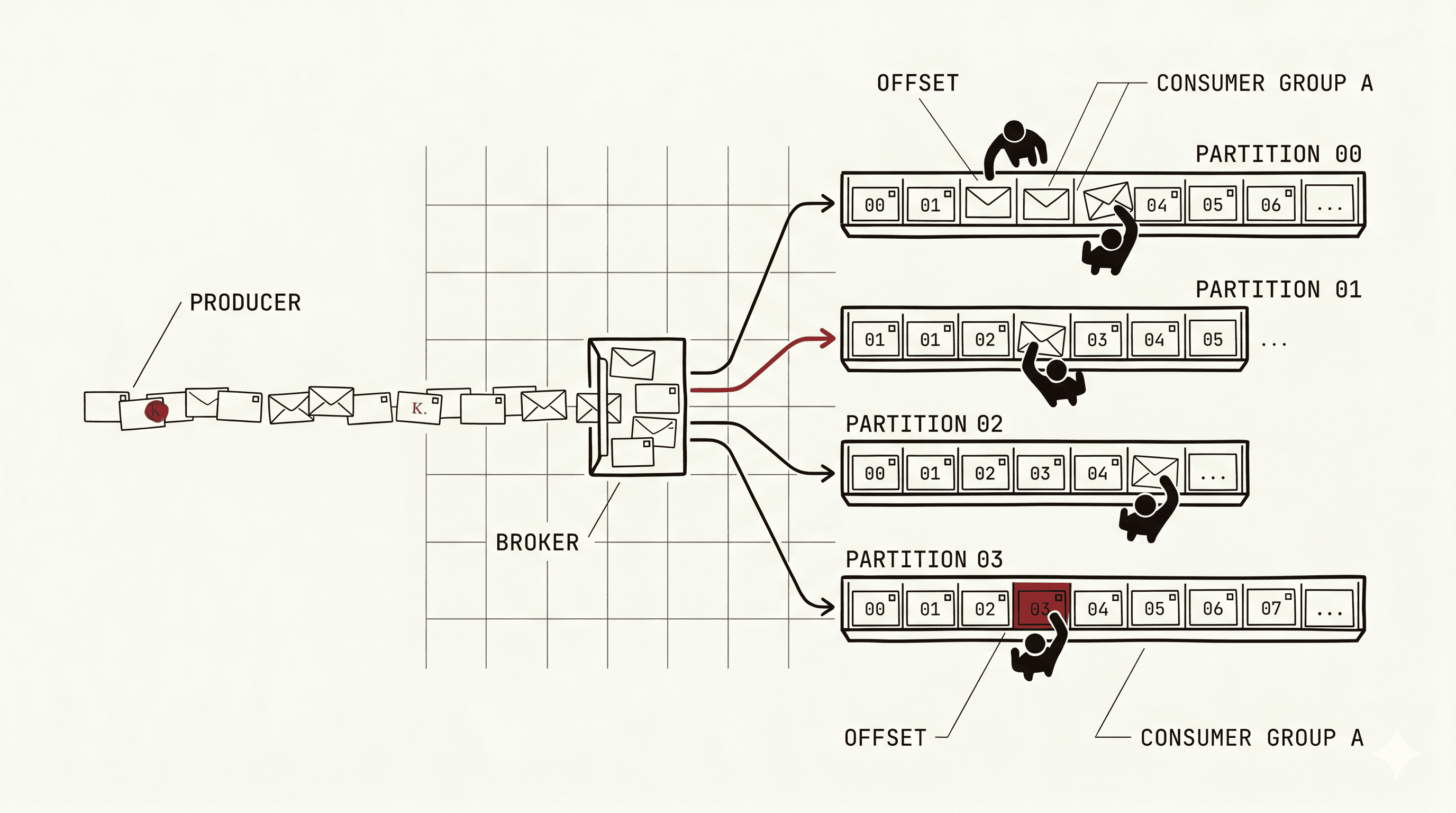

A topic is like a category of events (e.g., orders, payments, user-events). Each topic is divided into partitions, and that's what allows Kafka to scale horizontally.

Topic: orders

├── Partition 0: [msg1, msg4, msg7, ...]

├── Partition 1: [msg2, msg5, msg8, ...]

└── Partition 2: [msg3, msg6, msg9, ...]

Each message within a partition has an offset -- a sequential number that identifies its position. Consumers use this offset to know where they left off.
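To make offsets concrete, here is a minimal in-memory sketch of a single partition. It is a toy model and not how brokers actually store data; `PartitionLog`, `append`, `readFrom`, and the reader names are all hypothetical and exist only for illustration.

```javascript
// Toy model of one partition: an append-only array where the index is the offset.
// PartitionLog and its methods are hypothetical names, not part of any Kafka client.
class PartitionLog {
  constructor() {
    this.records = [];
  }

  // Appending never rewrites earlier entries; the new record's offset is its position.
  append(value) {
    this.records.push(value);
    return this.records.length - 1;
  }

  // Reading removes nothing: it returns up to maxRecords records starting at `offset`.
  readFrom(offset, maxRecords = 10) {
    return this.records.slice(offset, offset + maxRecords);
  }
}

const partition0 = new PartitionLog();
["msg1", "msg4", "msg7"].forEach((m) => partition0.append(m));

// Two independent readers each track their own position in the same log.
const offsets = { billing: 0, analytics: 2 };
const billingBatch = partition0.readFrom(offsets.billing);     // all three records
const analyticsBatch = partition0.readFrom(offsets.analytics); // only the last record
offsets.billing += billingBatch.length;
offsets.analytics += analyticsBatch.length;
```

Neither reader affects the other, and the log itself is unchanged after both reads. That is the property that separates a log from a queue.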

Consumer Groups

Here's where Kafka's scalability magic lies. When multiple consumers belong to the same consumer group, Kafka distributes the partitions among them:

Consumer Group: order-processor

├── Consumer 1 → Partition 0, Partition 1

└── Consumer 2 → Partition 2

Each partition is consumed by only one consumer in the group. Want to process faster? Add more consumers (up to the number of partitions).
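The distribution above can be sketched as a small function. This is a simplified, range-style assignment written for illustration. Kafka's real assignors are pluggable and more involved, and `assignPartitions` is a hypothetical name.

```javascript
// Simplified range-style assignment: contiguous chunks of partitions per consumer.
// It only demonstrates the invariant that each partition has exactly one owner
// within a group; it is not Kafka's actual assignor.
function assignPartitions(partitionCount, consumers) {
  const assignment = new Map(consumers.map((c) => [c, []]));
  const base = Math.floor(partitionCount / consumers.length);
  const extra = partitionCount % consumers.length; // the first `extra` consumers get one more
  let next = 0;
  consumers.forEach((consumer, i) => {
    const take = base + (i < extra ? 1 : 0);
    for (let n = 0; n < take; n++) {
      assignment.get(consumer).push(next++);
    }
  });
  return assignment;
}

const twoConsumers = assignPartitions(3, ["consumer-1", "consumer-2"]);
// consumer-1 owns partitions 0 and 1, consumer-2 owns partition 2

const fourConsumers = assignPartitions(3, ["c1", "c2", "c3", "c4"]);
// c4 gets an empty list: with more consumers than partitions, the extras sit idle
```

The second call shows why the partition count caps parallelism: a fourth consumer on a three-partition topic has nothing to read.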

Replication and Durability

Kafka replicates each partition across multiple brokers. One replica acts as the leader; if its broker dies, an in-sync follower on another broker takes over. The number of copies is set by the replication factor -- in production, use at least 3.
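As a rough sketch, this is what a topic request with a replication factor of 3 could look like, shaped like the argument to `admin.createTopics` in the kafkajs client. The function name `buildTopicRequest`, the partition count, and the `min.insync.replicas` setting are assumptions added for illustration; only the replication factor comes from the advice above.

```javascript
// Builds the argument for an admin call shaped like kafkajs `admin.createTopics(...)`.
// buildTopicRequest is a hypothetical helper. The partition count and the
// min.insync.replicas value are illustrative assumptions, not recommendations from the text.
function buildTopicRequest(topic, numPartitions = 6) {
  return {
    topics: [
      {
        topic,
        numPartitions,
        replicationFactor: 3, // three copies, each on a different broker
        configEntries: [
          // With acks=all producers, writes are rejected when fewer than 2 replicas are in sync.
          { name: "min.insync.replicas", value: "2" },
        ],
      },
    ],
  };
}

const request = buildTopicRequest("orders");
// With a connected kafkajs admin client, this would be: await admin.createTopics(request);
```

Pairing a replication factor of 3 with a minimum of 2 in-sync replicas is a common starting point because the topic stays writable with one broker down.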

KRaft: Bye-Bye ZooKeeper

Quick 2026 update: if you read older Kafka tutorials, you'll see ZooKeeper everywhere. It was the external coordinator that handled broker membership, leader elections, and metadata. Kafka 4.0 (2025) removed ZooKeeper entirely. Kafka now runs only in KRaft mode (Kafka Raft), where a quorum of controller nodes inside Kafka itself manages metadata using the Raft consensus protocol. Translation: one less moving part to operate, faster failovers, and simpler deployments. If you're starting today, you're already on KRaft and should never look back.

Implementing in Practice with Node.js

Producer

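Important tip: use the key strategically. With the default partitioner, the key is hashed to pick a partition, so messages with the same key land on the same partition (as long as the partition count doesn't change). Kafka only guarantees ordering within a partition, so the key is how you keep related events, such as everything for one customer or one order, in sequence.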
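A minimal producer sketch follows, assuming a client with the kafkajs-style call `producer.send({ topic, messages })`. The producer is passed in as a parameter so the function can run against a stub. The `orders` topic, the event shape, and the names `publishOrderCreated` and `stubProducer` are hypothetical.

```javascript
// Sketch of a producer call shaped like the kafkajs API: producer.send({ topic, messages }).
// In a real service the producer would come from something like
// `new Kafka({ ... }).producer()` followed by `await producer.connect()`.
// The "orders" topic and the order fields are assumptions for illustration.
async function publishOrderCreated(producer, order) {
  return producer.send({
    topic: "orders",
    messages: [
      {
        // Same customer -> same key -> same partition, so one customer's events stay in order.
        key: String(order.customerId),
        value: JSON.stringify({ type: "order.created", ...order }),
      },
    ],
  });
}

// Stub standing in for a connected producer; it just records what would be sent.
const sent = [];
const stubProducer = {
  send: async (record) => {
    sent.push(record);
  },
};

publishOrderCreated(stubProducer, { orderId: "o-1", customerId: 42, total: 99.9 });
```

Keying by customer ID is one choice among several. Keying by order ID would instead keep each order's lifecycle in sequence while spreading a single customer's orders across partitions.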

Consumer
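Here is a minimal consumer sketch, assuming a client with kafkajs 2.x-style `subscribe` and `run({ eachMessage })` calls. The consumer is injected so the function can be exercised without a broker. `startOrderConsumer` and `handleOrder` are hypothetical names, and the JSON payload format is an assumption.

```javascript
// Sketch of a consumer shaped like the kafkajs API (2.x-style subscribe/run calls).
// In a real service the consumer would come from
// `kafka.consumer({ groupId: "order-processor" })` followed by `await consumer.connect()`.
// `handleOrder` is a hypothetical callback holding your business logic.
async function startOrderConsumer(consumer, handleOrder) {
  await consumer.subscribe({ topics: ["orders"], fromBeginning: false });
  await consumer.run({
    eachMessage: async ({ topic, partition, message }) => {
      // message.value arrives as a Buffer; the JSON payload format is an assumption.
      const event = JSON.parse(message.value.toString());
      await handleOrder(event, { topic, partition, offset: message.offset });
      // If handleOrder throws, the error propagates to the client, which typically
      // redelivers the message. The handler should therefore tolerate duplicates.
    },
  });
}
```

Every instance started with the same group ID joins the same consumer group, so running a second copy of this process is enough to split the topic's partitions between the two.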

Watch Out: Your Consumer Must Be Idempotent

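With the usual setup, where offsets are committed after processing, Kafka gives you at-least-once delivery. If a consumer crashes after handling a message but before committing its offset, the message is delivered again after the restart or rebalance. So the same message can be processed more than once, and your consumer MUST be idempotent.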

The most common strategy: a deduplication table.
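A minimal sketch of the idea, using an in-memory `Set` as a stand-in for the table. It assumes every event carries a unique `eventId` set by the producer, which is an assumption and not something Kafka provides for you. `createIdempotentHandler` and `applySideEffect` are hypothetical names.

```javascript
// In-memory stand-in for a deduplication table. In production this would be a database
// table with a unique constraint on the event ID, ideally written in the same
// transaction as the side effect. `eventId` is an assumed field on every event.
function createIdempotentHandler(applySideEffect, processedIds = new Set()) {
  return async function handle(event) {
    if (processedIds.has(event.eventId)) {
      return "skipped"; // duplicate delivery: already handled, do nothing
    }
    await applySideEffect(event);
    // Record the ID only after the side effect succeeds, so a failure leads to a retry.
    processedIds.add(event.eventId);
    return "processed";
  };
}
```

This in-memory version forgets everything on restart and does not protect against two instances handling the same event concurrently. A real table closes both gaps when the ID insert and the business write commit together.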

When to Use (and When NOT to Use) Kafka

Use Kafka when:

- You need event sourcing or event-driven architecture

- Multiple consumers need to react to the same event

- High volume of events (thousands per second)

- You need event replay (reprocessing history)

- Strong decoupling between services

Do NOT use Kafka when:

- You have a simple task queue (use Redis/BullMQ)

- You need complex message routing (use RabbitMQ)

- Your volume is low and the complexity isn't justified

- You're just getting started and don't need event streaming yet

Kafka Alternatives Worth Knowing

Kafka isn't the only game in town anymore. Quick map of the landscape:

- Redpanda -- Kafka API compatible, written in C++, no JVM, no ZooKeeper ever. Drop-in replacement that's simpler to operate.

- NATS JetStream -- Lightweight, fast, great for edge and IoT. Less ecosystem than Kafka, much smaller footprint.

- AWS Kinesis -- Fully managed, tight AWS integration. Pricier at scale, fewer knobs.

- RabbitMQ Streams -- RabbitMQ's answer to Kafka, useful if you already live in Rabbit-land.

- WarpStream -- Diskless, S3-backed Kafka protocol. Cheap for high-throughput, slightly higher latency. Interesting if your bottleneck is cross-AZ bandwidth costs.

Rule of thumb: if you want the ecosystem (Connect, Streams, schema registry, every tutorial on Earth), pick Kafka. If you want simpler ops, look at Redpanda or WarpStream.

FAQ

1. Should I run Kafka myself or use managed (Confluent Cloud / AWS MSK)? Unless you have a dedicated platform team, go managed. Self-hosting Kafka is a part-time job in itself: broker tuning, rebalancing, upgrades, monitoring. Confluent Cloud and MSK cost more on paper but are cheaper than burning two engineers.

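2. How many partitions is "too many"? A commonly cited rule of thumb is to stay under ~4,000 partitions per broker and ~200,000 per cluster. Those figures date from the ZooKeeper era, and KRaft raises the cluster-wide ceiling, but the per-partition costs remain: more partitions means more parallelism but also more overhead, longer rebalances, and higher end-to-end latency. Start small (6 to 12 per topic), measure, then grow.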

3. Retention: can I just keep everything forever? Technically yes. With tiered storage (hot data on local disk, cold data in object storage such as S3), it's actually viable now. But ask yourself why. If you need event sourcing with Kafka as the source of truth, sure. Otherwise, set a sane retention (7 to 30 days) and offload to a data lake for long-term analytics.

4. Is exactly-once semantics actually real? Yes, but with asterisks. Kafka's idempotent, transactional producer, combined with consumers that read at the read_committed isolation level, gives exactly-once processing for read-process-write pipelines that stay inside Kafka. The moment you write to an external database or call an API, you're back to at-least-once unless you implement idempotency yourself. That dedup table from earlier? Still mandatory.

5. Is Schema Registry worth the trouble? For anything beyond a toy project, yes. Avro or Protobuf with a schema registry catches breaking changes at publish time instead of at 3am in production. The 30 minutes of setup pays for itself the first time someone tries to rename a field.

Key Takeaways

Kafka is powerful, but it's not a silver bullet. It shines when you need a distributed event log that multiple services consume independently.

Before adopting it, ask yourself: "Do I really need event streaming, or would a simple queue do the job?" If the answer is event streaming, Kafka is probably the best choice. If it's a task queue, look at simpler solutions first.

And remember: idempotent consumers are not optional. That's what will save you when (not if) something goes wrong.