Async Processing: How to Stop Blocking Your API with Heavy Tasks

Learn how to decouple heavy tasks from your API using queues, workers, and event-driven architecture. Practical examples with BullMQ, Redis, and Node.js.

Your user clicks "Generate Report" and stares at a spinner for 30 seconds. The request times out. They try again. Now two reports are being generated simultaneously and your server is crying. If this sounds familiar, you need async processing.

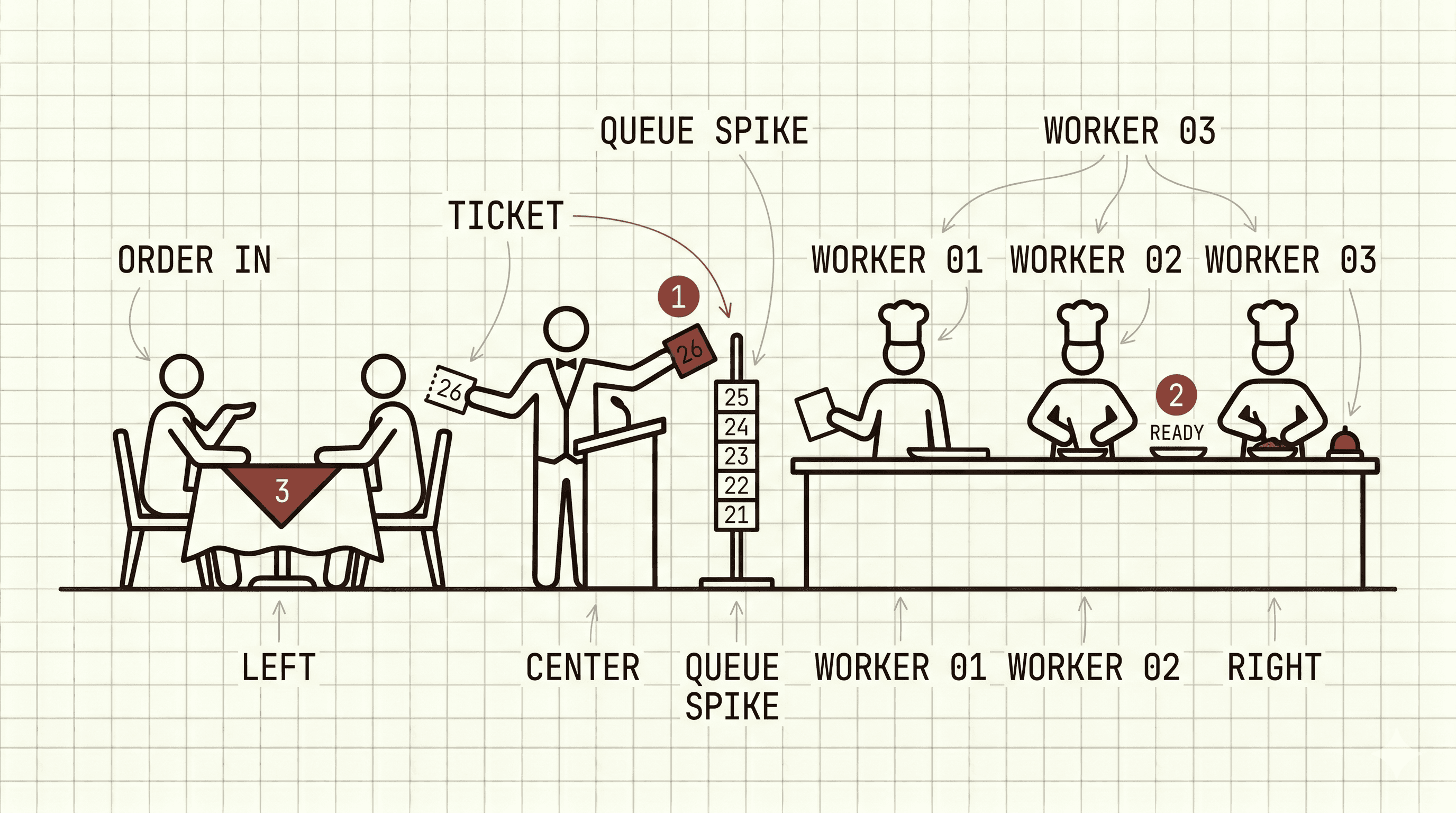

Mental model: A sync API is the barista who takes your order AND makes your coffee while you wait at the counter, holding up the entire line. An async API is the barista who takes your order, hands you a buzzer, and calls you when it's ready. Same coffee, but now ten other people got served too.

The Problem: Everything in the Same Request

The most common (and most problematic) pattern is doing everything synchronously inside the API handler:
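A minimal sketch of that pattern, assuming a hypothetical checkout flow. The `OrderDeps` interface, the step names, and the latencies in the comments are all invented for illustration (they add up to 4 seconds); the point is only that every step is awaited inside the request.

```typescript
// Hypothetical dependencies. In a real app these would hit a database,
// a payment provider, an email API, and so on.
interface OrderDeps {
  createOrder(cart: string[]): Promise<string>;           // ~200 ms
  chargePayment(orderId: string): Promise<void>;          // ~800 ms
  sendConfirmationEmail(orderId: string): Promise<void>;  // ~1000 ms
  updateInventory(cart: string[]): Promise<void>;         // ~500 ms
  generateInvoice(orderId: string): Promise<void>;        // ~1500 ms
}

// Everything runs inside the request: the client waits for the sum of all
// steps, and a failure in ANY step rejects the whole request.
async function handleCheckoutSync(
  deps: OrderDeps,
  cart: string[],
): Promise<{ orderId: string }> {
  const orderId = await deps.createOrder(cart);
  await deps.chargePayment(orderId);
  await deps.sendConfirmationEmail(orderId); // slow email provider? checkout waits
  await deps.updateInventory(cart);
  await deps.generateInvoice(orderId);
  return { orderId };
}
```

Note that in this sketch the customer has already been charged by the time the email step can throw, yet the request still fails.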

4 seconds for a single request. And if any step fails, the entire order fails. This doesn't scale.

The Solution: Accept, Confirm, and Process Later

The principle is simple: your API should only do what's essential (create the order and process the payment) and delegate the rest to async processing.
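Here is one way that split could look, as a sketch rather than a finished handler. `JobQueue` and `CriticalDeps` are hypothetical interfaces; as far as I know BullMQ's `Queue#add(name, data)` fits the `JobQueue` shape, but the interface is there mainly so the example runs without Redis. The job names are made up.

```typescript
// Minimal queue shape (an assumption for this sketch).
interface JobQueue {
  add(name: string, data: Record<string, unknown>): Promise<unknown>;
}

interface CriticalDeps {
  createOrder(cart: string[]): Promise<string>;
  chargePayment(orderId: string): Promise<void>;
}

async function handleCheckoutAsync(
  deps: CriticalDeps,
  queue: JobQueue,
  cart: string[],
): Promise<{ orderId: string; status: "confirmed" }> {
  // Essential work stays in the request.
  const orderId = await deps.createOrder(cart);
  await deps.chargePayment(orderId);

  // Side effects become jobs. Enqueueing is a small write to the queue,
  // so the response no longer waits on email, inventory, or invoicing.
  await queue.add("send-confirmation-email", { orderId });
  await queue.add("update-inventory", { orderId, cart });
  await queue.add("generate-invoice", { orderId });

  return { orderId, status: "confirmed" };
}
```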

Think of a restaurant kitchen. The waiter doesn't cook your steak. They write a ticket, slap it on the rail, and the line cooks pick it up. Your API is the waiter. The workers are the kitchen.

War Story: Shopify and the Black Friday Queue

On Black Friday, Shopify processes millions of orders per hour. They don't send confirmation emails inline. They don't update inventory inline. Every non-critical side effect goes into a queue. Why? Because Mailgun can hiccup for 30 seconds and you don't want your entire checkout to go down because an email provider is slow. Stripe does the same with webhooks: they retry with exponential backoff for up to 3 days. The lesson is brutal and simple: if a downstream dependency can fail, it will fail, and it will fail during your biggest traffic spike. Queues are how you decouple "customer got their order" from "everyone else found out about it."

BullMQ + Redis: Queues in Node.js

For Node.js, BullMQ is one of the most mature options for job queues:
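A sketch of the producer side. To keep it runnable without Redis, the queue is typed through a small hypothetical `EmailQueue` interface; the option names (`attempts`, `backoff`, `jobId`) mirror BullMQ's job options as I understand them, and the commented lines show roughly how a real `Queue` would be created. The queue name, job name, and payload fields are assumptions.

```typescript
// Subset of job options used here (names follow BullMQ's job options).
interface RetryOptions {
  attempts: number;
  backoff: { type: "exponential" | "fixed"; delay: number };
  jobId?: string;
}

interface EmailJobData {
  orderId: string;
  to: string;
}

interface EmailQueue {
  add(name: string, data: EmailJobData, opts: RetryOptions): Promise<unknown>;
}

// With BullMQ the queue would be created roughly like this:
//   import { Queue } from "bullmq";
//   const emailQueue = new Queue("email", {
//     connection: { host: "localhost", port: 6379 },
//   });
async function enqueueConfirmationEmail(
  queue: EmailQueue,
  data: EmailJobData,
): Promise<void> {
  await queue.add("send-email", data, {
    attempts: 3,                                   // up to 3 tries in total
    backoff: { type: "exponential", delay: 1000 }, // base delay of 1s, growing per retry
    jobId: `email-${data.orderId}`,                // stable ID so a double submit is not queued twice
  });
}
```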

And the worker that processes them:
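A sketch of the consumer side, under the same assumptions. The processor is written as a plain function so it can be tested on its own; `EmailJob` is a hypothetical subset of the job object, and `SendEmail` stands in for whatever email client you use. The commented wiring shows roughly how it would be handed to a BullMQ `Worker`.

```typescript
// Hypothetical subset of the job object the processor needs.
interface EmailJob {
  id?: string;
  name: string;
  data: { orderId: string; to: string };
  attemptsMade: number;
}

// Stand-in for a real email client.
type SendEmail = (to: string, subject: string) => Promise<void>;

// Builds a processor: an async function that receives a job and either
// resolves (job completed) or throws (attempt failed, queue may retry).
function makeEmailProcessor(sendEmail: SendEmail) {
  return async (job: EmailJob): Promise<{ sent: true }> => {
    if (job.name !== "send-email") {
      throw new Error(`Unknown job name: ${job.name}`);
    }
    await sendEmail(job.data.to, `Order ${job.data.orderId} confirmed`);
    return { sent: true };
  };
}

// Wiring with BullMQ would look roughly like this:
//   import { Worker } from "bullmq";
//   const worker = new Worker("email", makeEmailProcessor(sendEmail), {
//     connection: { host: "localhost", port: 6379 },
//     concurrency: 5,
//   });
//   worker.on("failed", (job, err) => console.error(job?.id, err.message));
```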

Idempotency is non-negotiable. With attempts: 3, your job WILL run more than once sometimes. Network blips, worker crashes mid-job, ambiguous failures. If sendEmail isn't idempotent, your user gets three confirmation emails and thinks you're spam. Use an idempotency key per job (the orderId works great) and check "did I already do this?" before doing it. Stripe built an empire on this principle.
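The "did I already do this?" check can be sketched like this. `ProcessedKeys` is a hypothetical in-memory stand-in; a real worker would need a durable store shared by all workers (for example a database table with a unique key), since a `Set` disappears when the process restarts.

```typescript
// In-memory stand-in for a durable idempotency store.
class ProcessedKeys {
  private seen = new Set<string>();

  // Returns true only for the first caller that claims the key.
  claim(key: string): boolean {
    if (this.seen.has(key)) return false;
    this.seen.add(key);
    return true;
  }

  // Gives the key back so a later retry can try again.
  release(key: string): void {
    this.seen.delete(key);
  }
}

async function sendEmailOnce(
  store: ProcessedKeys,
  orderId: string,
  sendEmail: (orderId: string) => Promise<void>,
): Promise<"sent" | "skipped"> {
  const key = `confirmation-email:${orderId}`;
  if (!store.claim(key)) return "skipped"; // a previous attempt already did it

  try {
    await sendEmail(orderId);
    return "sent";
  } catch (err) {
    store.release(key); // the send failed, so let the retry through
    throw err;
  }
}
```

Claiming the key before sending favors "never send twice" over "never miss one": if the worker dies mid-send, the key stays claimed. Which side to err on depends on the job.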

BullMQ vs Alternatives

Pick your poison based on stack and scale:

| Tool | Stack | Best for |
|---|---|---|
| BullMQ | Node.js + Redis | Most Node apps. Solid, simple, battle-tested. |
| Sidekiq | Ruby + Redis | Rails shops. The OG that inspired BullMQ. |
| Celery | Python + Redis/RabbitMQ | Django/Flask. Heavier but feature-rich. |
| AWS SQS | Any + AWS | Managed, cheap, infinite scale. No scheduling, no priorities out of the box. |
| Inngest | Any (SaaS) | Serverless-friendly. Durable workflows, great DX, pay per event. |
| Trigger.dev | Any (SaaS/self-host) | Long-running jobs, observability built-in. |
| Temporal | Any | Complex, multi-step workflows. Overkill for "send an email," perfect for orchestrating a 30-step order saga. |

Rule of thumb: start with BullMQ if you're on Node. Reach for Inngest/Trigger.dev if you're serverless-first. Reach for Temporal when your "job" is actually a workflow with 12 steps and human approvals.

Async Processing Patterns

1. Fire and Forget

Queue it and forget about it. Good for analytics, logs, non-critical notifications.
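A minimal sketch of fire and forget, assuming a hypothetical `JobQueue` interface and a made-up `track-event` job name. The handler does not await the enqueue, and a failure is logged instead of surfacing to the user.

```typescript
// Minimal queue shape (an assumption for this sketch).
interface JobQueue {
  add(name: string, data: Record<string, unknown>): Promise<unknown>;
}

// Enqueue without awaiting the result. The caller moves on immediately.
function trackEvent(
  queue: JobQueue,
  event: string,
  props: Record<string, unknown>,
): void {
  queue
    .add("track-event", { event, ...props, at: Date.now() })
    .catch((err: unknown) => {
      // Losing one analytics event is acceptable; failing the request is not.
      console.warn("analytics enqueue failed:", err);
    });
}
```

The `.catch` matters: without it, a rejected enqueue becomes an unhandled promise rejection.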

2. Async Request-Reply

The client receives an ID and can check the status later:
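A sketch of the two endpoints involved, with the HTTP layer reduced to plain functions. The in-memory `Map`, the route paths, and the ID format are assumptions; with a real queue you would look the job up in the queue's own storage instead.

```typescript
type JobState = "queued" | "processing" | "completed" | "failed";

interface JobRecord {
  state: JobState;
  resultUrl?: string;
}

interface HttpResponse {
  status: number;
  body: Record<string, unknown>;
}

// In-memory stand-in for wherever job state actually lives.
const reportJobs = new Map<string, JobRecord>();
let nextReportId = 1;

// POST /reports: accept the work and answer immediately with 202 + an ID.
function submitReport(): HttpResponse {
  const jobId = `report-${nextReportId++}`;
  reportJobs.set(jobId, { state: "queued" });
  return { status: 202, body: { jobId, statusUrl: `/reports/${jobId}` } };
}

// GET /reports/:jobId: the client polls this until the job is done.
function getReportStatus(jobId: string): HttpResponse {
  const job = reportJobs.get(jobId);
  if (!job) return { status: 404, body: { error: "unknown job" } };
  return { status: 200, body: { jobId, ...job } };
}

// Called by the worker once the report has been generated.
function completeReport(jobId: string, resultUrl: string): void {
  reportJobs.set(jobId, { state: "completed", resultUrl });
}
```

Polling is the simplest option; the same job ID could just as well key a webhook or a WebSocket push.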

3. Event-Driven

Publish an event and let whoever is interested react to it. Think Twitter's fan-out: one tweet triggers a queue job that writes to millions of follower timelines, asynchronously. The user who tweeted doesn't wait.
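To make the shape concrete, here is a tiny in-process event bus. It is a sketch only: `EventBus`, `ShopEvents`, and the event name are invented, and in production each subscriber would more likely be its own queue or consumer so the publisher does not wait for any of them.

```typescript
type Handler<T> = (payload: T) => Promise<void>;

class EventBus<Events extends Record<string, unknown>> {
  private handlers = new Map<keyof Events, Handler<any>[]>();

  subscribe<K extends keyof Events>(event: K, handler: Handler<Events[K]>): void {
    const list = this.handlers.get(event) ?? [];
    list.push(handler);
    this.handlers.set(event, list);
  }

  // Runs every subscriber and returns how many of them failed.
  // One failing subscriber does not stop the others.
  async publish<K extends keyof Events>(event: K, payload: Events[K]): Promise<number> {
    const list = this.handlers.get(event) ?? [];
    const results = await Promise.allSettled(list.map((h) => h(payload)));
    return results.filter((r) => r.status === "rejected").length;
  }
}

// Hypothetical event catalogue for a shop.
type ShopEvents = {
  "order.created": { orderId: string };
};
```

The publisher only knows the event name and payload. Adding a new reaction (say, a loyalty-points service) means adding a subscriber, not touching the checkout code.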

Scheduled and Recurring Jobs

BullMQ also supports scheduling:
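A sketch of delayed and recurring jobs. The option names follow BullMQ's `delay` (milliseconds) and `repeat.pattern` (a cron expression) as I understand them; `SchedulableQueue` is a hypothetical interface so the example runs without Redis, and the job names are made up. Newer BullMQ releases also have a dedicated job scheduler API, so check the docs for your version.

```typescript
// Timing-related subset of the job options.
interface TimingOptions {
  delay?: number;               // milliseconds to wait before the job becomes runnable
  repeat?: { pattern: string }; // cron expression for recurring jobs
}

interface SchedulableQueue {
  add(name: string, data: Record<string, unknown>, opts: TimingOptions): Promise<unknown>;
}

const MINUTE_MS = 60_000;

// One-off job that runs after a delay, e.g. an abandoned-cart reminder.
function remindLater(queue: SchedulableQueue, cartId: string, minutes: number) {
  return queue.add("cart-reminder", { cartId }, { delay: minutes * MINUTE_MS });
}

// Recurring job: every day at 03:00.
function scheduleNightlyReport(queue: SchedulableQueue) {
  return queue.add("nightly-report", {}, { repeat: { pattern: "0 3 * * *" } });
}
```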

Dead Letter Queue: When Everything Goes Wrong

After N failed attempts, move the job to a DLQ. Think of it as the "lost and found" of your system: jobs that tried, failed, and now need a human to take a look.
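One way to sketch this: when a job fails, check whether it has used up its attempts, and if so copy it into a separate queue along with the failure reason. `FailedJob` and `DeadLetterQueue` are hypothetical shapes; with BullMQ, a function like this would typically be called from the worker's failure event, comparing the job's attempts made against its configured `attempts`.

```typescript
interface FailedJob {
  id: string;
  name: string;
  data: Record<string, unknown>;
  attemptsMade: number; // attempts used so far, including the one that just failed
  maxAttempts: number;  // the attempts limit the job was created with
}

interface DeadLetterQueue {
  add(name: string, data: Record<string, unknown>): Promise<unknown>;
}

function isExhausted(job: FailedJob): boolean {
  return job.attemptsMade >= job.maxAttempts;
}

// Returns true if the job was moved to the DLQ, false if it will be retried.
async function onJobFailed(
  dlq: DeadLetterQueue,
  job: FailedJob,
  error: Error,
): Promise<boolean> {
  if (!isExhausted(job)) return false; // retries remain, leave it to the queue

  await dlq.add(job.name, {
    originalJobId: job.id,
    payload: job.data,
    failedReason: error.message,
    failedAt: new Date().toISOString(),
  });
  return true;
}
```

Keeping the original payload and the failure reason together is what makes the DLQ useful: a human can fix the cause and replay the job.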

Monitoring Your Queues

Don't put queues in production without monitoring. At minimum, track:

- Queue depth: how many jobs are waiting

- Processing time: how long each job takes

- Failure rate: what share of finished jobs ended in failure

- DLQ size: how many jobs have permanently failed
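The four numbers above can be derived from raw counts roughly like this. `JobCounts` is an assumed shape (BullMQ can report per-state counts for a queue, and the field names here follow its state names); the durations and DLQ size are assumed to be collected by your own code.

```typescript
interface JobCounts {
  waiting: number;
  active: number;
  completed: number;
  failed: number;
}

interface QueueHealth {
  depth: number;           // jobs waiting to be picked up
  failureRate: number;     // failed / (completed + failed), between 0 and 1
  avgProcessingMs: number; // mean duration of the sampled jobs
  dlqSize: number;         // jobs that failed permanently
}

function summarize(
  counts: JobCounts,
  durationsMs: number[],
  dlqSize: number,
): QueueHealth {
  const finished = counts.completed + counts.failed;
  const totalMs = durationsMs.reduce((sum, ms) => sum + ms, 0);
  return {
    depth: counts.waiting,
    failureRate: finished === 0 ? 0 : counts.failed / finished,
    avgProcessingMs: durationsMs.length === 0 ? 0 : totalMs / durationsMs.length,
    dlqSize,
  };
}
```

If completed or failed jobs are removed from the queue automatically, the failure rate only covers the jobs that are still retained, so it is safer to compute it over a fixed time window.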

For a web dashboard, BullMQ works with Bull Board, which lets you browse queues, inspect individual jobs, and see what failed.

FAQ

Do priority queues actually work?

Yes, BullMQ supports priority on add(). Lower number = higher priority. Use sparingly. If everything is priority 1, nothing is. Good for "premium customer emails go first," bad for fine-grained ordering.

My queue depth is way bigger than my worker throughput. Am I cooked?

Depends. A queue that drains during off-peak is healthy backpressure. A queue that grows monotonically is a fire. Alert on "queue depth trending up for N minutes," not on absolute size.
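A sketch of that alert rule, assuming you sample queue depth once per minute and keep the samples oldest first. The function name and the strict "every sample is higher than the last" rule are my own simplification; a real alert would probably tolerate small dips.

```typescript
// samples: queue depth measured once per minute, oldest first.
// Returns true if depth rose in each of the last `minutes` intervals.
function isGrowingFor(samples: number[], minutes: number): boolean {
  if (samples.length < minutes + 1) return false; // not enough history yet

  const window = samples.slice(-(minutes + 1));
  for (let i = 1; i < window.length; i++) {
    if (window[i] <= window[i - 1]) return false; // drained or held steady at least once
  }
  return true;
}
```

A queue sitting at 50,000 jobs but shrinking passes this check; a queue at 40 jobs that has grown every minute for ten minutes does not, which is the behavior you want from the alert.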

Can I run BullMQ on Vercel or other serverless?

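Queues yes (just add() from your handler). Workers no: a BullMQ worker is a long-running process that keeps a Redis connection open and waits for jobs, while serverless functions are short-lived and capped by an execution time limit. Run workers on Render/Fly/Railway/a small VPS, or switch to Inngest/Trigger.dev, which are designed for serverless from day one.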

If Redis dies, do I lose my jobs?

Only what wasn't persisted. Enable Redis AOF with appendfsync everysec and you lose roughly one second of writes, at most, on a crash. For stronger guarantees, use Redis with replication or a managed provider (Upstash, ElastiCache). And remember: jobs that were mid-processing when Redis or a worker went down can come back as "stalled" and get retried, which is one more reason to make every job idempotent.

How do I handle workers in a monorepo without losing my mind?

Keep job definitions (name + payload type) in a shared package both the API and the worker import. Workers get their own app/package with their own Dockerfile and deploy pipeline. Never let the API and workers share a process in production, even if it's tempting in dev.
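A sketch of what that shared package might contain. The job names, payload fields, and the `RawQueue` interface are hypothetical; in a real monorepo the types would be exported from the shared package, and `enqueue` and `describeJob` would live in the API and worker apps respectively.

```typescript
// --- shared package: job names and payload types, imported by both sides ---
type JobPayloads = {
  "send-email": { orderId: string; to: string };
  "generate-report": { reportId: string; format: "pdf" | "csv" };
};
type JobName = keyof JobPayloads;

// A job as a discriminated union: the name decides the payload type.
type AnyJob = { [N in JobName]: { name: N; data: JobPayloads[N] } }[JobName];

// Minimal queue shape (an assumption for this sketch).
interface RawQueue {
  add(name: string, data: unknown): Promise<unknown>;
}

// --- API side: the compiler rejects a payload that doesn't match the name ---
function enqueue<N extends JobName>(queue: RawQueue, name: N, data: JobPayloads[N]) {
  return queue.add(name, data);
}

// --- worker side: switching on the name narrows the payload type ---
function describeJob(job: AnyJob): string {
  switch (job.name) {
    case "send-email":
      return `email to ${job.data.to} for order ${job.data.orderId}`;
    case "generate-report":
      return `${job.data.format} report ${job.data.reportId}`;
  }
}
```

With this in place, renaming a payload field breaks the build in both apps at once instead of failing at runtime in the worker.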

Key Takeaways

If your API is slow because it does too much in a single request, async processing is the answer. The rule is simple: respond with the essentials for the user and delegate the rest.

Start with BullMQ + Redis for Node.js. It's simple to set up, has automatic retries, and scales well. For more complex scenarios (multiple independent consumers, event streaming), consider moving to a log-based broker such as Kafka.

And never forget: monitor your queues. A queue that grows unchecked is a ticking time bomb.