Load Balancing: How to Distribute Traffic and Scale Your Application

Understand load balancing algorithms, compare Nginx vs HAProxy vs cloud load balancers, and learn how to scale your application horizontally.

Thiago Saraiva

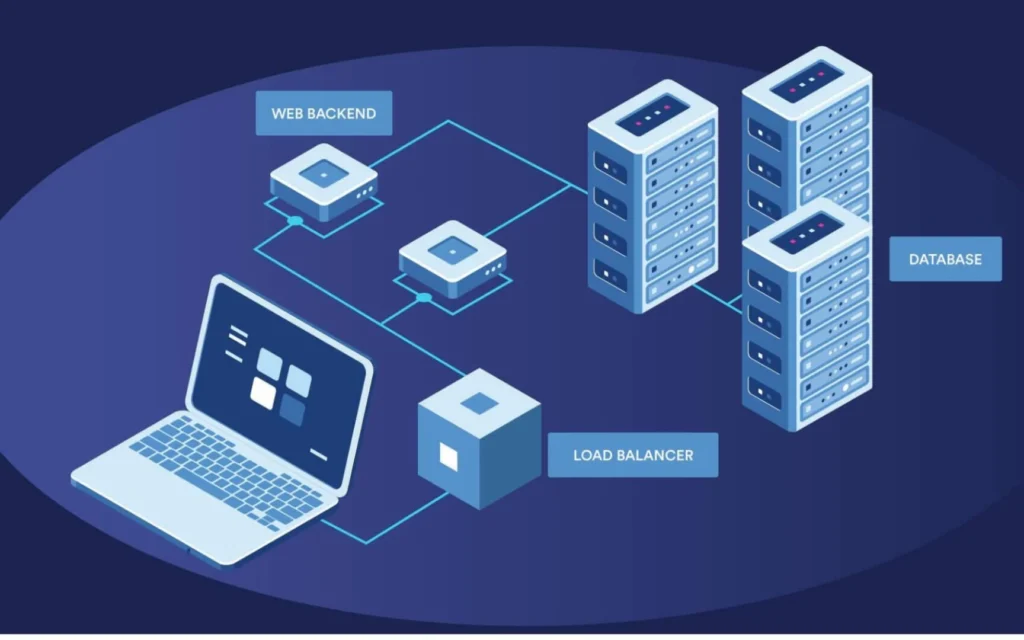

Your single server is at its limit. CPU pinned at 90%, responses crawling, users complaining. You can try vertical scaling (bigger machine), but that hits a ceiling and burns money fast. The alternative? Add more servers and spread the traffic across them. That's load balancing.

Mental model: A load balancer is the traffic cop at a busy intersection, waving cars into whichever lane is moving fastest. Or, if you prefer a fancier metaphor: the maître d' at a packed restaurant, seating each new party at the table that can serve them soonest. Your servers are the lanes (or tables). The maître d' doesn't cook the food. He just decides who goes where.

The Basics: Why Load Balancing?

Without a load balancer, a single server is a single point of failure. If it goes down, everything goes down.

With a load balancer, traffic is distributed across multiple servers. If one dies, the others absorb the load:

Clients --> Load Balancer --> [Server 1, Server 2, Server 3]

Immediate benefits:

- Horizontal scalability: add servers on demand

- High availability: if one server fails, traffic flows to the others

- Zero-downtime deploys: drain a server from the pool, deploy, bring it back

War story: GitHub spent years fighting edge routing pain before standardizing on a heavily customized HAProxy setup, which they detailed publicly around 2015. A single tuned HAProxy box was routing Git, SSH, and HTTPS traffic across backend pools at serious throughput. Around the same era, WhatsApp became the canonical example of brutal efficiency: a tiny engineering team, FreeBSD plus Erlang, and famously around two million TCP connections per server in their 2012 benchmarks. The LB layer isn't glamorous, but it's the reason your architecture doesn't fold on launch day.

Layer 4 vs Layer 7: The Difference That Matters

Layer 4 (Transport): routes based only on IP and port. Very fast, but blind to HTTP content. Examples: AWS NLB, HAProxy in TCP mode.

Layer 7 (Application): inspects HTTP content (URL, headers, cookies). Slower, but far more flexible. Examples: AWS ALB, Nginx, HAProxy in HTTP mode.

For most web applications, you want Layer 7. Reach for Layer 4 when raw network performance is non-negotiable (databases, generic TCP).
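To make the difference concrete, here is a minimal sketch of the two kinds of routing decision. The pool names, ports, and the admin.example.com host are hypothetical, and a real balancer does far more than this. The point is only what information each layer can see.

```javascript
// Hypothetical pool names and ports, for illustration only.

// Layer 4: the balancer sees addresses and ports, nothing else.
function choosePoolL4(connection) {
  const poolsByPort = { 443: "web", 5432: "postgres" };
  return poolsByPort[connection.destPort] ?? null;
}

// Layer 7: the balancer has parsed the HTTP request,
// so it can route on host, path, headers, or cookies.
function choosePoolL7(request) {
  if (request.headers.host === "admin.example.com") return "admin";
  if (request.path.startsWith("/api/")) return "api";
  return "web";
}

console.log(choosePoolL4({ destPort: 5432 })); // postgres
console.log(
  choosePoolL7({ path: "/api/users", headers: { host: "example.com" } })
); // api
```

Notice that the Layer 4 function could not tell /api/users from /index.html even if it wanted to. That blindness is also why it is cheap.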

Balancing Algorithms

Round Robin

Distributes sequentially: Server A, B, C, A, B, C...

Simple and efficient when all servers are identical and requests take similar time.
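As a rough sketch (the function name is mine, not from any library), round robin is little more than a counter that wraps around:

```javascript
// Returns a pick() function that cycles through the servers in order.
function createRoundRobin(servers) {
  let next = 0;
  return function pick() {
    const server = servers[next];
    next = (next + 1) % servers.length; // wrap back to the first server
    return server;
  };
}

const pick = createRoundRobin(["A", "B", "C"]);
const picks = Array.from({ length: 6 }, () => pick());
console.log(picks.join(", ")); // A, B, C, A, B, C
```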

Weighted Round Robin

Same idea, but with weights. A beefier server gets more requests:

Server A (8 CPUs) -> weight: 4

Server B (4 CPUs) -> weight: 2

Server C (2 CPUs) -> weight: 1

Perfect when your fleet is heterogeneous, or for canary deploys (new version with a low weight).
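There are several ways to implement weights. The sketch below uses a "smooth" variant that interleaves picks instead of sending four requests in a row to the heaviest server. That choice is my assumption for illustration, and the function name is hypothetical.

```javascript
// Smooth weighted round robin: each pick, every server gains its weight,
// the server with the highest running score wins and pays back the total.
function createWeightedRoundRobin(servers) {
  const state = servers.map((s) => ({ ...s, current: 0 }));
  const totalWeight = state.reduce((sum, s) => sum + s.weight, 0);

  return function pick() {
    let best = state[0];
    for (const s of state) {
      s.current += s.weight;
      if (s.current > best.current) best = s;
    }
    best.current -= totalWeight;
    return best.name;
  };
}

const pickServer = createWeightedRoundRobin([
  { name: "A", weight: 4 },
  { name: "B", weight: 2 },
  { name: "C", weight: 1 },
]);
const sequence = Array.from({ length: 7 }, () => pickServer());
console.log(sequence.join(" ")); // A B A C A B A
```

Over every cycle of 7 requests, A gets 4, B gets 2, and C gets 1, matching the weights above.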

Least Connections

Sends to the server with the fewest active connections. Ideal when request duration varies wildly (WebSockets, streaming, heavy operations).

IP Hash

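The client's IP address is hashed to pick a server, so the same client keeps landing on the same server as long as its IP and the server pool stay the same. Useful for sticky sessions without a shared session store.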
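Here is a minimal sketch of the idea. It uses FNV-1a as the hash purely because it is short. Real balancers pick their own hash functions, and the helper names are hypothetical.

```javascript
// FNV-1a: a small, non-cryptographic 32-bit string hash.
function fnv1a(text) {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0; // keep it an unsigned 32-bit value
  }
  return hash;
}

// Same IP and same server list always yield the same server.
// Caveat: adding or removing a server remaps most clients.
function pickByIp(clientIp, servers) {
  return servers[fnv1a(clientIp) % servers.length];
}

const servers = ["10.0.1.10", "10.0.1.11", "10.0.1.12"];
console.log(pickByIp("203.0.113.7", servers) === pickByIp("203.0.113.7", servers)); // true
```

If remapping on pool changes hurts, consistent hashing is the usual next step, but that is beyond this sketch.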

My production recommendation: Weighted Least Connections in most cases. It adapts to load and handles heterogeneous servers gracefully.
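A minimal sketch of what that means in practice: pick the server with the lowest ratio of active connections to weight. The names and the acquire/release API are my own invention for illustration. With every weight set to 1 this reduces to plain Least Connections.

```javascript
function createWeightedLeastConnections(servers) {
  const pool = servers.map((s) => ({
    name: s.name,
    weight: s.weight ?? 1,
    active: 0, // connections currently in flight
  }));

  // Choose the server with the lowest active/weight ratio (first one wins ties).
  function acquire() {
    let best = pool[0];
    for (const s of pool) {
      if (s.active / s.weight < best.active / best.weight) best = s;
    }
    best.active += 1;
    return best;
  }

  // Call when the request or connection finishes.
  function release(server) {
    if (server.active > 0) server.active -= 1;
  }

  return { acquire, release, pool };
}

const lb = createWeightedLeastConnections([
  { name: "big", weight: 2 },
  { name: "small", weight: 1 },
]);
const order = [lb.acquire().name, lb.acquire().name, lb.acquire().name];
console.log(order.join(", ")); // big, small, big
```

Unlike round robin, this reacts to what the servers are actually doing: a server stuck on slow requests keeps its connections open and stops receiving new ones.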

DNS Round-Robin: The Poor Man's Load Balancer

Before you spin up an ALB, know that DNS itself can "balance" traffic. Register multiple A records for the same hostname, and DNS servers typically rotate the order of the IPs they return, so different clients end up connecting to different servers. No extra infrastructure, close to zero cost.

api.example.com. A 10.0.1.10

api.example.com. A 10.0.1.11

api.example.com. A 10.0.1.12

But the caveats are brutal:

- No health checks: DNS will happily send users to a dead box until the TTL expires

- TTL caching: browsers and resolvers cache aggressively, so "removing" a server can take hours

- No weighting or smart routing: the rotation happens per DNS lookup, not per request

Fine for a side project. Not fine for anything with an SLA.
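The TTL caveat is easy to underestimate, so here is a small simulation of it. Everything is hypothetical: lookup stands in for a real DNS query and now is an injected clock, so no network is involved.

```javascript
// A toy caching resolver: answers are reused until their TTL runs out.
function createCachingResolver(lookup, now) {
  const cache = new Map();
  return function resolve(hostname) {
    const hit = cache.get(hostname);
    if (hit && hit.expiresAt > now()) return hit.addresses; // still cached
    const { addresses, ttlSeconds } = lookup(hostname);
    cache.set(hostname, { addresses, expiresAt: now() + ttlSeconds * 1000 });
    return addresses;
  };
}

let clockMs = 0;
let zone = ["10.0.1.10", "10.0.1.11", "10.0.1.12"];
const resolve = createCachingResolver(
  () => ({ addresses: zone, ttlSeconds: 300 }),
  () => clockMs
);

resolve("api.example.com");        // client caches all three IPs
zone = ["10.0.1.11", "10.0.1.12"]; // you remove the dead 10.0.1.10
clockMs = 120_000;                 // two minutes later...
console.log(resolve("api.example.com").includes("10.0.1.10")); // true
clockMs = 300_000;                 // TTL has expired
console.log(resolve("api.example.com").includes("10.0.1.10")); // false
```

Real clients stack several of these caches (browser, OS, ISP resolver), and not all of them honor the TTL, which is where the "hours" come from.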

Anycast: Routing by Geography

For global traffic, Anycast is the next level up. The same IP is announced from multiple data centers worldwide, and BGP routes each user to the nearest one in network terms, which is usually (but not always) the geographically closest. Cloudflare, Fastly, and AWS Global Accelerator all use this to put their edge as close to the user as possible. Think of it as load balancing across continents before the request even touches your application layer.

Configuring in Nginx

Key directives in an Nginx upstream block:

- max_fails=3 fail_timeout=30s: after 3 failed attempts within 30s, the server is taken out of rotation for 30s

- backup: only receives traffic if the primaries fail

- keepalive 32: keeps up to 32 idle connections to the upstream servers open per worker process for reuse (reduces latency)
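To build intuition for max_fails and fail_timeout, here is a simplified model of that passive check. This is not Nginx's implementation, just a sketch of the behavior described above, with hypothetical names and an injectable clock for testing.

```javascript
// Simplified model: too many failures inside the window marks the server
// unavailable for the same amount of time.
function createPassiveHealth({ maxFails = 3, failTimeoutMs = 30_000, now = Date.now } = {}) {
  let failures = [];        // timestamps of recent failed attempts
  let unavailableUntil = 0; // server is skipped until this time

  return {
    isAvailable() {
      return now() >= unavailableUntil;
    },
    recordFailure() {
      const t = now();
      failures = failures.filter((ts) => t - ts < failTimeoutMs); // drop old failures
      failures.push(t);
      if (failures.length >= maxFails) {
        unavailableUntil = t + failTimeoutMs;
        failures = [];
      }
    },
    recordSuccess() {
      failures = [];
    },
  };
}

let fakeNow = 0;
const backend = createPassiveHealth({ now: () => fakeNow });
backend.recordFailure();
backend.recordFailure();
backend.recordFailure();
console.log(backend.isAvailable()); // false
fakeNow = 30_000;
console.log(backend.isAvailable()); // true
```

Note that this kind of check is passive: it only learns a server is sick from real user requests failing. Active health checks, covered next, probe the server before users do.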

Health Checks: Don't Send Traffic to Dead Servers

Health checks are non-negotiable. Without them, the load balancer happily ships requests to a server that's already on fire.

In your Node.js backend, always expose two endpoints:

- /health/live: is the process running? (liveness)

- /health/ready: is it ready to receive traffic? (readiness)
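A minimal sketch of those two endpoints, written as a plain handler with the (req, res) signature that Node's http.createServer expects. The appState object and the response bodies are assumptions for illustration. In a real app, readiness would reflect your database pool, caches, and so on.

```javascript
// Hypothetical readiness flag: flip to true once dependencies are connected.
const appState = { ready: false };

function sendJson(res, status, body) {
  res.writeHead(status, { "Content-Type": "application/json" });
  res.end(JSON.stringify(body));
}

function healthHandler(req, res) {
  // Liveness: if this code runs at all, the process is alive.
  if (req.url === "/health/live") {
    return sendJson(res, 200, { status: "alive" });
  }
  // Readiness: 503 tells the load balancer to keep traffic away for now.
  if (req.url === "/health/ready") {
    return appState.ready
      ? sendJson(res, 200, { status: "ready" })
      : sendJson(res, 503, { status: "not ready" });
  }
  return sendJson(res, 404, { error: "not found" });
}

// Wiring (not executed in this sketch):
//   require("node:http").createServer(healthHandler).listen(3000);
```

Keep the liveness check dumb. If it starts querying the database, a database blip will get every healthy process restarted at once.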

In Kubernetes, this is the difference between a restart (liveness failed) and pulling the pod out of the Service endpoints (readiness failed).

Sessions: The Classic Problem

With multiple servers, where do sessions live? Three approaches:

- Sticky Sessions (IP Hash): simple, but if the server dies, the session dies with it

- Redis Session Store: every server queries Redis. Scalable and resilient.

- JWT Stateless: no state on the server. Best for APIs and SPAs.

For modern APIs, JWT is almost always the right answer. For traditional web apps, Redis Session Store.
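To see why JWT removes the session problem, here is a bare-bones HS256 sign and verify using only Node's built-in crypto module. It assumes a reasonably recent Node version (for the base64url encoding) and is only a sketch. In production you would reach for a vetted JWT library rather than rolling your own. The function names are hypothetical.

```javascript
const crypto = require("node:crypto");

const b64url = (value) => Buffer.from(value).toString("base64url");

function signToken(payload, secret) {
  const header = b64url(JSON.stringify({ alg: "HS256", typ: "JWT" }));
  const body = b64url(JSON.stringify(payload));
  const signature = crypto
    .createHmac("sha256", secret)
    .update(`${header}.${body}`)
    .digest("base64url");
  return `${header}.${body}.${signature}`;
}

// Returns the payload if the token is valid, otherwise null.
// Sketch only: a real verifier would also validate the header's alg field.
function verifyToken(token, secret) {
  const parts = token.split(".");
  if (parts.length !== 3) return null;
  const [header, body, signature] = parts;

  const expected = crypto.createHmac("sha256", secret).update(`${header}.${body}`).digest();
  const given = Buffer.from(signature, "base64url");
  if (given.length !== expected.length || !crypto.timingSafeEqual(given, expected)) {
    return null; // signature mismatch: forged or signed with another secret
  }

  const payload = JSON.parse(Buffer.from(body, "base64url").toString("utf8"));
  if (typeof payload.exp === "number" && payload.exp * 1000 <= Date.now()) {
    return null; // expired
  }
  return payload;
}
```

The only thing the servers share is the secret. Server 1 can issue the token and server 3 can verify it without either of them storing anything.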

Cloud Load Balancers

On AWS, the choice tree is short:

- ALB (Application): for HTTP/HTTPS. Routing by URL, header, host. Default pick.

- NLB (Network): for TCP/UDP. Ultra-low latency. Use for databases or non-HTTP protocols.

- CLB (Classic): legacy, previous generation. Don't use it for new workloads.

The cloud advantage: integrated auto-scaling, automatic multi-AZ, zero infrastructure to babysit.

PM2 Cluster Mode: Load Balancing on a Single Machine

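Before adding more servers, squeeze every CPU core out of the one you have with PM2 cluster mode, for example by running pm2 start app.js -i max (app.js stands in for your entry file).

This spins up one worker per CPU core with automatic load balancing across them.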

FAQ

Isn't the load balancer itself a SPOF? Yes, if you run just one. In production, you run the LB as a redundant pair (active/passive with VRRP, or active/active behind Anycast). Cloud LBs like ALB and NLB are already multi-AZ by default. Never put a single Nginx box in front of a fleet and call it a day.

Do I still need sticky sessions if I use JWT? No, and that's the whole point. JWT carries the auth state in the token itself, so any server can validate the request. Sticky sessions only matter when the server holds in-memory state. If you're on JWT and still flipping sticky sessions on, you're hurting your load distribution for no reason.

ALB or Cloudflare in front: which one? Both, usually. Cloudflare handles DDoS, WAF, caching, and Anycast edge routing. ALB handles application-level routing inside your VPC. Cloudflare is the bouncer at the door. ALB is the host inside the club deciding which room you go to.

Kubernetes Service vs Ingress: what's the difference? A Service load balances inside the cluster (Layer 4, usually ClusterIP or LoadBalancer). An Ingress is Layer 7, sits at the edge, and routes external HTTP traffic to Services based on host and path. You almost always want both: Ingress for north-south traffic, Service for east-west.

Does round robin break WebSocket connections? The initial handshake gets distributed fine, but once established, WebSockets are long-lived and pinned to that server. The real problem is broadcasting: if user A is on server 1 and user B is on server 2, how does A's message reach B? Answer: a pub/sub backplane like Redis or NATS. For the balancing itself, use Least Connections instead of Round Robin, so you don't pile long-lived sockets onto one unlucky box.
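Here is the backplane idea as a toy, with an in-memory object standing in for Redis or NATS and plain callbacks standing in for sockets. All names are hypothetical, and a real setup would run the servers as separate processes talking to an external broker.

```javascript
// In-memory stand-in for a Redis or NATS channel.
function createBackplane() {
  const subscribers = new Set();
  return {
    subscribe(handler) {
      subscribers.add(handler);
      return () => subscribers.delete(handler); // unsubscribe function
    },
    publish(message) {
      for (const handler of subscribers) handler(message);
    },
  };
}

// Each server only knows about the sockets connected to it.
function createChatServer(name, backplane) {
  const sockets = new Map(); // userId -> deliver(text)

  backplane.subscribe(({ to, text }) => {
    const deliver = sockets.get(to);
    if (deliver) deliver(text); // only the server holding that socket delivers
  });

  return {
    name,
    connect(userId, deliver) {
      sockets.set(userId, deliver);
    },
    send(to, text) {
      backplane.publish({ to, text }); // never assume the recipient is local
    },
  };
}

const bus = createBackplane();
const server1 = createChatServer("server-1", bus);
const server2 = createChatServer("server-2", bus);

const inboxB = [];
server1.connect("A", () => {});
server2.connect("B", (text) => inboxB.push(text));

server1.send("B", "hello from A");
console.log(inboxB); // [ 'hello from A' ]
```

The load balancer never needs to know who is talking to whom. It just spreads connections, and the backplane handles the rest.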

Key Takeaways

Load balancing is the foundation of horizontal scalability. Start with PM2 cluster mode on a single machine. When you outgrow that, add servers and put Nginx or an ALB in front.

Algorithm: Least Connections (weighted, if your servers differ) for most scenarios. Health checks are mandatory. And for sessions, use JWT or Redis. Never rely on sticky sessions in serious production environments.