Reverse Proxy Demystified: Nginx, Caddy, and Traefik in Practice

A practical guide to Reverse Proxies: understand the differences between Nginx, Caddy, and Traefik, with configuration examples for SSL, caching, load balancing, and more.

Thiago Saraiva

Everyone says you "need an Nginx in front of your application," but few explain why. And when you sit down to set one up, you find 15 different tools and zero consensus on which to pick. Let's fix that.

What Is a Reverse Proxy?

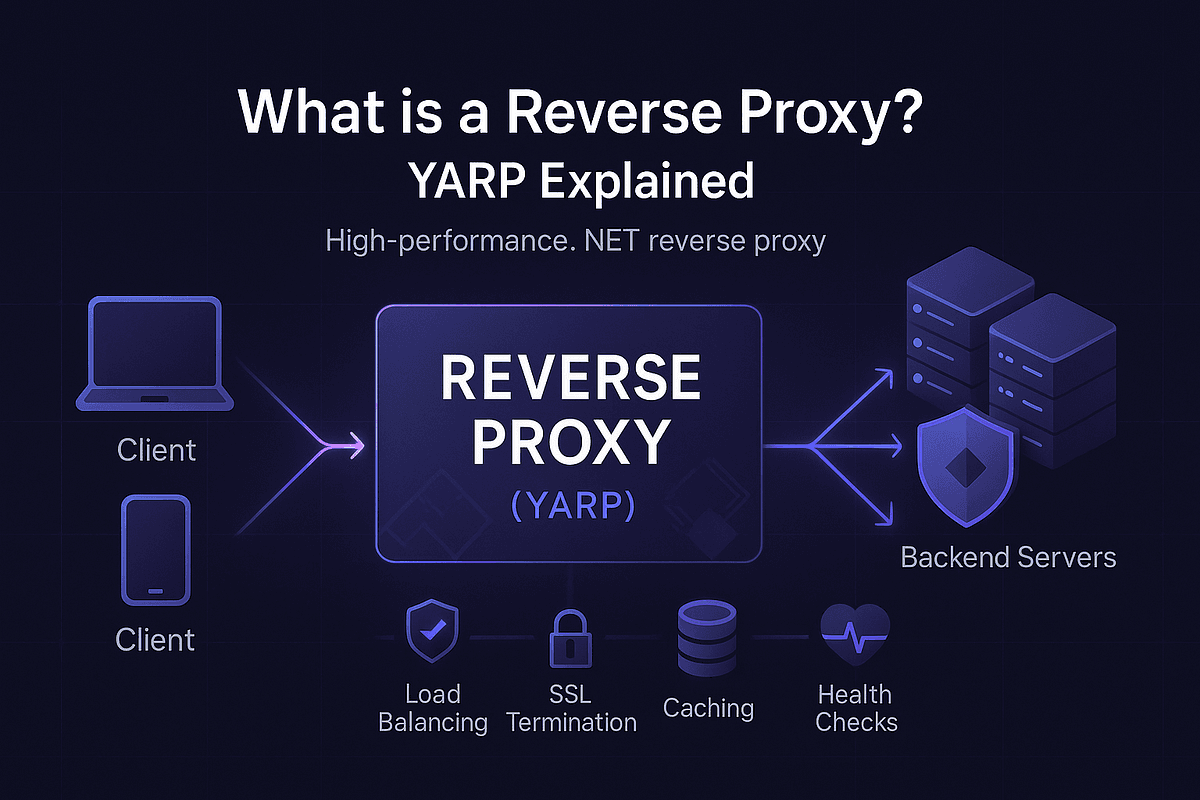

A reverse proxy is an intermediary server that sits between the client and your backend. The client thinks it's talking directly to the server, but it's actually going through a middleman that decides what to do with the request.

Client --> Internet --> Reverse Proxy --> Backend Server(s)

Don't confuse it with a forward proxy (such as a corporate web proxy or, loosely, a VPN), which sits on the client side and acts on the client's behalf. The reverse proxy sits on the server side.

Mental model: Think of a reverse proxy as the receptionist at a fancy hotel. Guests (your backends) never pick up outside calls directly. The receptionist answers, checks who's calling, logs the call, routes it to the right room, says "not available" when needed, or even handles simple questions herself without bothering anyone upstairs. Same job, same value.

Why Use One?

The 5 reasons that justify putting a reverse proxy in front of your application:

- SSL Termination: centralizes HTTPS certificates in one place

- Load Balancing: distributes traffic across multiple backends

- Caching: stores responses and reduces server load

- Security: hides your infrastructure, blocks direct attacks

- Compression: compresses responses (gzip, brotli) before sending

When You DON'T Need a Reverse Proxy

Let's be honest: not every setup deserves one.

- Local development: your `npm run dev` doesn't need Nginx in front. Stop cosplaying production.

- Internal CLI services: a cron job hitting an internal API over the VPC is fine without a middleman.

- Tiny internal tools: the dashboard only 3 people on the LAN use? Skip it.

- Serverless/managed platforms: Vercel, Cloud Run, and friends already put a proxy in front. Don't add a second one.

If the traffic never leaves your machine or your private network, the receptionist is just extra overhead.

Nginx: The Classic That Never Fails

Nginx (stable 1.28.x as of early 2026) is the most widely used reverse proxy in the world. Extreme performance, battle-tested, packed with advanced features.

Basic reverse proxy configuration:
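A minimal sketch of what such a configuration can look like. The domain `example.com` and the backend on port 3000 are assumptions borrowed from the Caddy examples in this article, and the forwarded headers are a common convention rather than a requirement:

```nginx
# Lives inside the http {} context, e.g. in a file included from nginx.conf
server {
    listen 80;
    server_name example.com;                # placeholder domain (assumption)

    location / {
        proxy_pass http://127.0.0.1:3000;   # assumed backend address

        # Tell the backend which host was requested and who the real client is
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto $scheme;
    }
}
```

Running `nginx -t` before reloading catches syntax errors without touching live traffic.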

For SSL with HTTP redirect:
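One way this could be written, assuming certificates already exist on disk. The paths below follow the usual Certbot layout and are an assumption, as are the domain and backend port:

```nginx
# Plain HTTP: do nothing except redirect to HTTPS
server {
    listen 80;
    server_name example.com;
    return 301 https://$host$request_uri;
}

# HTTPS: terminate TLS here, talk plain HTTP to the backend
server {
    listen 443 ssl;
    server_name example.com;

    # Assumed certificate locations (Certbot-style layout)
    ssl_certificate     /etc/letsencrypt/live/example.com/fullchain.pem;
    ssl_certificate_key /etc/letsencrypt/live/example.com/privkey.pem;
    ssl_protocols       TLSv1.2 TLSv1.3;

    location / {
        proxy_pass http://127.0.0.1:3000;           # assumed backend address
        proxy_set_header Host $host;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto $scheme; # lets the app know the client used HTTPS
    }
}
```

Unlike Caddy, Nginx does not obtain or renew certificates in this setup; a separate ACME client has to do that.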

And to serve a SPA (React/Vue) with fallback:
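A sketch of the fallback pattern, assuming the built assets live in `/var/www/html` (the same root used in the Caddy example) and the API runs on port 3000; both are illustrative:

```nginx
server {
    listen 80;
    server_name example.com;

    root  /var/www/html;   # assumed location of the built SPA
    index index.html;

    # Serve the file if it exists, otherwise hand the route to the SPA
    location / {
        try_files $uri $uri/ /index.html;
    }

    # API calls bypass the fallback and go to the backend
    location /api/ {
        proxy_pass http://127.0.0.1:3000;   # assumed backend address
        proxy_set_header Host $host;
    }
}
```

The `try_files` line is what makes client-side routes such as `/settings/profile` survive a browser refresh.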

Caddy: Automatic HTTPS and Zero Config

Caddy (v2.8+ in 2026) is the modern option for those who don't want to suffer. HTTPS is automatic via Let's Encrypt or ZeroSSL.

The same Nginx setup, in Caddy:

    example.com {
        reverse_proxy localhost:3000
    }

Seriously. That's it. Caddy handles the certificate, renewal, HTTP to HTTPS redirect, all of it.

For more complex scenarios:

    example.com {
        handle /api/* {
            reverse_proxy localhost:3000
        }

        handle /ws {
            reverse_proxy localhost:3001
        }

        handle {
            root * /var/www/html
            try_files {path} /index.html
            file_server
        }

        encode gzip
    }

Traefik: Built for Containers

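If you use Docker or Kubernetes, Traefik (v3.x in 2026) is the natural choice. It discovers services automatically via Docker labels: you attach labels such as `traefik.enable=true` and a `traefik.http.routers.<name>.rule` host rule to the container itself (where `<name>` is whatever router name you pick), and Traefik builds the route from them.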

Spun up a new container? Traefik detects it and configures routing automatically. Removed it? Traefik drops it from the config. Particularly powerful in microservices environments.

War Story: The Day the Proxy Saved (or Killed) Production

GitHub's engineering blog has documented their long-running use of HAProxy for the Git load balancing tier, including the 2016 deep dive on how they handle graceful HAProxy reloads at scale without dropping connections. They needed smarter health checks and finer connection control than raw backends could give them, and the proxy layer was the only place to solve it cleanly. Lesson: your reverse proxy becomes the lever you pull when raw backends can't bend.

The other side of the coin: a team I mentored once exposed their Node app directly on port 443, "to keep things simple." One botched deploy took the TLS listener down and the whole domain went dark, including the status page (also on the same box). A reverse proxy in front would have kept serving a maintenance page and stale /status from cache. They learned the expensive way that the receptionist also answers the phone when the guests are asleep.

Which One Should You Choose?

My direct recommendation:

- Small/medium project: Caddy. Zero setup, automatic HTTPS.

- High-traffic production: Nginx. Extreme performance, battle-tested.

- Microservices with Docker/K8s: Traefik. Auto-discovery, label-based config.

- Pure load balancing: HAProxy. Still the king for raw LB throughput.

Caching at the Proxy: Impressive Results

A technique few people use but that yields incredible results: micro-caching. Just 1 to 5 seconds of caching in Nginx:
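A rough sketch of micro-caching, assuming a 1-second lifetime, a cache directory under `/var/cache/nginx`, and a zone named `microcache`; all three are illustrative choices:

```nginx
# http {} context: where the cache lives and how big it may grow
proxy_cache_path /var/cache/nginx/micro levels=1:2 keys_zone=microcache:10m
                 max_size=100m inactive=10m;

server {
    listen 80;
    server_name example.com;

    location /api/ {
        proxy_pass http://127.0.0.1:3000;     # assumed backend address

        proxy_cache       microcache;
        proxy_cache_valid 200 1s;             # cache successful responses for 1 second

        # Let only one request per key go to the backend while the entry is being filled
        proxy_cache_lock on;

        # Serve the stale copy while a fresh one is fetched, or if the backend errors out
        proxy_cache_use_stale updating error timeout;
        proxy_cache_background_update on;

        # Debug header: HIT, MISS, EXPIRED, STALE, UPDATING...
        add_header X-Cache-Status $upstream_cache_status;
    }
}
```

By default Nginx caches only GET and HEAD requests and skips responses that carry `Set-Cookie` or a `Cache-Control` of `private` or `no-cache`, so this pattern fits public, read-heavy endpoints rather than per-user data.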

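Result: for a hot endpoint, 1000 req/s at the proxy collapses to roughly one backend request per cached URL per cache lifetime, so on the order of 1 req/s with a 1-second TTL. For read-heavy endpoints, it's almost magical.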

Security Quick Wins at the Proxy Layer

The proxy is the cheapest place to fix a dozen security issues at once. Do it once, protect every backend behind it:

- Hide the server version: `server_tokens off;` in Nginx. Don't advertise your version to scanners.

- Rate limit aggressively: `limit_req_zone $binary_remote_addr zone=api:10m rate=10r/s;` then `limit_req zone=api burst=20 nodelay;` on sensitive endpoints.

- Block bad User-Agents: `if ($http_user_agent ~* (nikto|sqlmap|nmap|masscan)) { return 403; }`. Free 403s for script kiddies.

- Centralize security headers: `Strict-Transport-Security`, `Content-Security-Policy`, `X-Content-Type-Options: nosniff`, `Referrer-Policy`. Set once at the proxy, never worry about each app getting it right.

- Cap body size: `client_max_body_size 10m;` stops trivial DoS via huge uploads.
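Put together, the directives above might sit in a config like the following sketch. The `/api/` location, the backend port, and the specific header values are assumptions; `Content-Security-Policy` is left out because a sensible policy depends on the application:

```nginx
# http {} context
server_tokens off;                                             # no version in headers or error pages
limit_req_zone $binary_remote_addr zone=api:10m rate=10r/s;    # per-client-IP request budget

server {
    listen 80;
    server_name example.com;

    client_max_body_size 10m;                                  # larger bodies get a 413

    # Reject a few well-known scanner User-Agents
    if ($http_user_agent ~* (nikto|sqlmap|nmap|masscan)) {
        return 403;
    }

    # "always" also attaches the headers to error responses
    add_header X-Content-Type-Options "nosniff" always;
    add_header Referrer-Policy "strict-origin-when-cross-origin" always;   # assumed policy value
    # Browsers honor HSTS only on HTTPS responses; it belongs in the 443 server block
    # add_header Strict-Transport-Security "max-age=31536000" always;

    location /api/ {
        limit_req zone=api burst=20 nodelay;                   # absorb short bursts, reject the rest
        proxy_pass http://127.0.0.1:3000;                      # assumed backend address
    }
}
```

One caveat worth knowing: a location that declares its own `add_header` stops inheriting the ones set at the server level, so headers can silently disappear when a block is customized.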

Express Behind a Proxy: Don't Forget trust proxy

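If you use Express.js, this is essential: enable the `trust proxy` setting, for example `app.set('trust proxy', 1)` when exactly one proxy sits in front of the app, so that `req.ip` is taken from the `X-Forwarded-For` header instead of the proxy's own address.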

Without trust proxy, your rate limiter will throttle every request as if it came from the same IP (the proxy). One bad actor, and everyone's locked out.

FAQ

1. Does a Kubernetes Ingress replace a reverse proxy?

Kind of. An Ingress controller (Nginx Ingress, Traefik, Istio Gateway) IS a reverse proxy, just wrapped in Kubernetes abstractions. You don't need a second one inside the cluster. You might still want one outside (cloud LB, Cloudflare) for edge concerns like WAF and global anycast.

2. Nginx vs OpenResty, what's the difference?

OpenResty is Nginx plus a bundled LuaJIT runtime and a pile of useful modules. If you need custom logic at the proxy (dynamic routing, auth, rewrites with real code), OpenResty saves you from compiling Nginx modules by hand. Plain Nginx covers 90% of cases.

3. What configs do I need for WebSocket pass-through?

Three lines matter in Nginx: `proxy_http_version 1.1;`, `proxy_set_header Upgrade $http_upgrade;`, and `proxy_set_header Connection "upgrade";`. Also raise `proxy_read_timeout` from its 60-second default to something like `3600s`, otherwise idle sockets get killed. Caddy and Traefik handle this automatically.
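In context, those directives could look like this sketch. The `/ws` path and port 3001 mirror the Caddy example and are assumptions about your app:

```nginx
# Inside a server {} block
location /ws {
    proxy_pass http://127.0.0.1:3001;          # assumed WebSocket backend

    # The three lines that make the HTTP Upgrade handshake pass through
    proxy_http_version 1.1;
    proxy_set_header Upgrade $http_upgrade;
    proxy_set_header Connection "upgrade";

    proxy_set_header Host $host;

    # Keep quiet connections open for up to an hour instead of the default 60s
    proxy_read_timeout 3600s;
}
```

Application-level pings sent more often than the timeout are a common complement, since they keep intermediaries between client and proxy from dropping the socket as well.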

4. Do sticky sessions still matter in 2026?

Less than before, but yes, in specific cases. If you've done your homework and moved session state to Redis or JWT, you don't need them. If you're dealing with legacy apps, WebSocket servers holding in-memory state, or SSE connections, sticky sessions (via ip_hash in Nginx or cookie-based in HAProxy/Traefik) are still the pragmatic fix.
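For the Nginx case, a minimal sketch of `ip_hash` stickiness. The upstream name and the two backend addresses are made up for illustration:

```nginx
# http {} context
upstream app_servers {
    ip_hash;                   # same client IP -> same backend, while that backend is up
    server 10.0.0.11:3000;     # hypothetical backend 1
    server 10.0.0.12:3000;     # hypothetical backend 2
}

server {
    listen 80;
    server_name example.com;

    location / {
        proxy_pass http://app_servers;
        proxy_set_header Host $host;
    }
}
```

Because the hash is based on the client address, many users behind one NAT or corporate gateway all land on the same backend, which is one reason cookie-based stickiness is often preferred where it is available.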

5. My load test breaks and I think it's keep-alive. What's happening?

Almost always one of two things: (a) you didn't configure the upstream with `keepalive 32;` in Nginx (together with `proxy_http_version 1.1;` and an empty `Connection` header, which upstream keep-alive needs), so every request opens a new TCP connection to the backend, plus a TLS handshake if the upstream is HTTPS, and you're measuring handshake cost; or (b) your load tester is reusing connections but the proxy's `keepalive_timeout` is too low and connections die mid-test. Tune both sides, then re-run.
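Both sides of that tuning, as a sketch. The upstream name, backend address, and the 65-second client timeout are illustrative assumptions:

```nginx
# http {} context
upstream backend {
    server 127.0.0.1:3000;     # assumed backend address
    keepalive 32;              # idle upstream connections kept open per worker process
}

server {
    listen 80;
    server_name example.com;

    keepalive_timeout 65s;     # client side: how long an idle client connection stays open

    location / {
        proxy_pass http://backend;

        # Upstream keep-alive needs HTTP/1.1 and no "Connection: close" sent to the backend
        proxy_http_version 1.1;
        proxy_set_header Connection "";
    }
}
```

Note that `keepalive 32` caps idle connections kept in the pool, not the total number of connections Nginx may open to the backend.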

Key Takeaways

A reverse proxy is not optional in production. Even with a single backend, SSL termination, security headers, and compression alone justify it.

Start simple: Caddy for new projects, Nginx if you already know it. Add caching when performance demands it. And if you live in containers, Traefik will save you hours of YAML.